MVM (matrix-vector multiplication) for neural network accelerators. (a) Sketch of a fully connected...

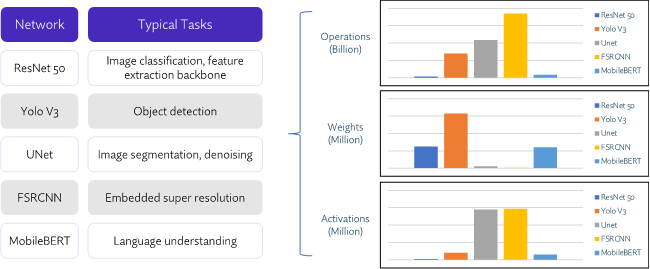

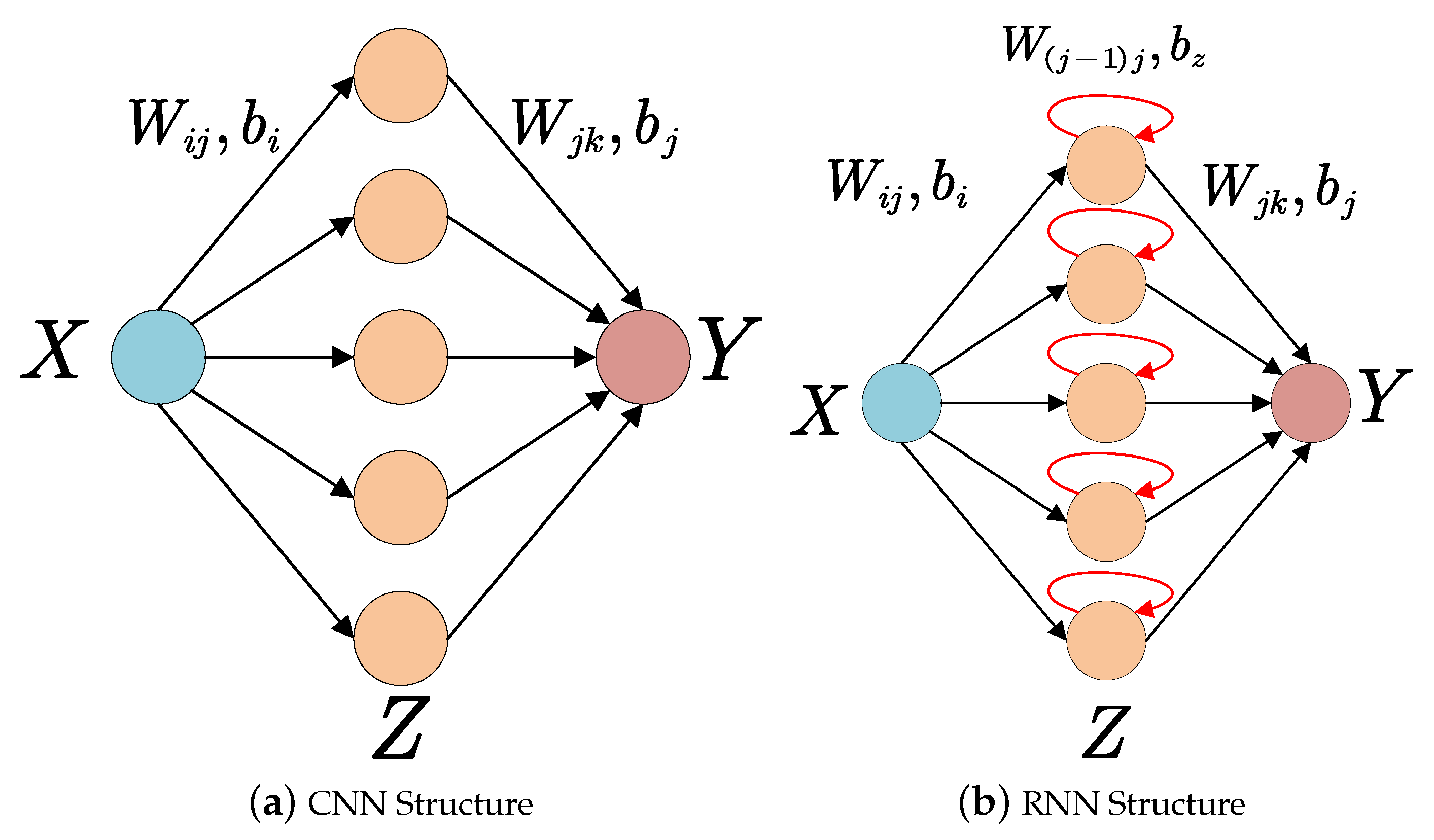
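The first caption refers to MVM (matrix-vector multiplication), the core operation of a fully connected layer. Each output is the dot product of one weight-matrix row with the input vector, giving y = Wx + b before any activation. The plain-Python sketch below shows only that arithmetic. The function names and example numbers are hypothetical, and it does not model how any particular accelerator maps the computation onto hardware.

```python
from typing import List

Matrix = List[List[float]]
Vector = List[float]

def mvm(weights: Matrix, x: Vector) -> Vector:
    """Matrix-vector multiply: y[i] = sum over j of weights[i][j] * x[j]."""
    if any(len(row) != len(x) for row in weights):
        raise ValueError("every weight row must have the same length as x")
    # each output element is one row-by-vector dot product (a chain of MACs)
    return [sum(w * xj for w, xj in zip(row, x)) for row in weights]

def fully_connected(weights: Matrix, bias: Vector, x: Vector) -> Vector:
    """Fully connected layer without activation: y = W x + b."""
    if len(bias) != len(weights):
        raise ValueError("bias length must equal the number of weight rows")
    return [yi + bi for yi, bi in zip(mvm(weights, x), bias)]

# Hypothetical layer with 2 outputs and 3 inputs
W = [[1.0, 2.0, 3.0],
     [0.0, -1.0, 4.0]]
b = [0.5, -0.5]
x = [1.0, 1.0, 2.0]
print(fully_connected(W, b, x))  # → [9.5, 6.5]
```

A layer with M outputs and N inputs needs M × N multiply-accumulate (MAC) operations, which is the workload these accelerators are built to parallelize.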

Electronics | Accelerating Neural Network Inference on FPGA-Based Platforms—A Survey

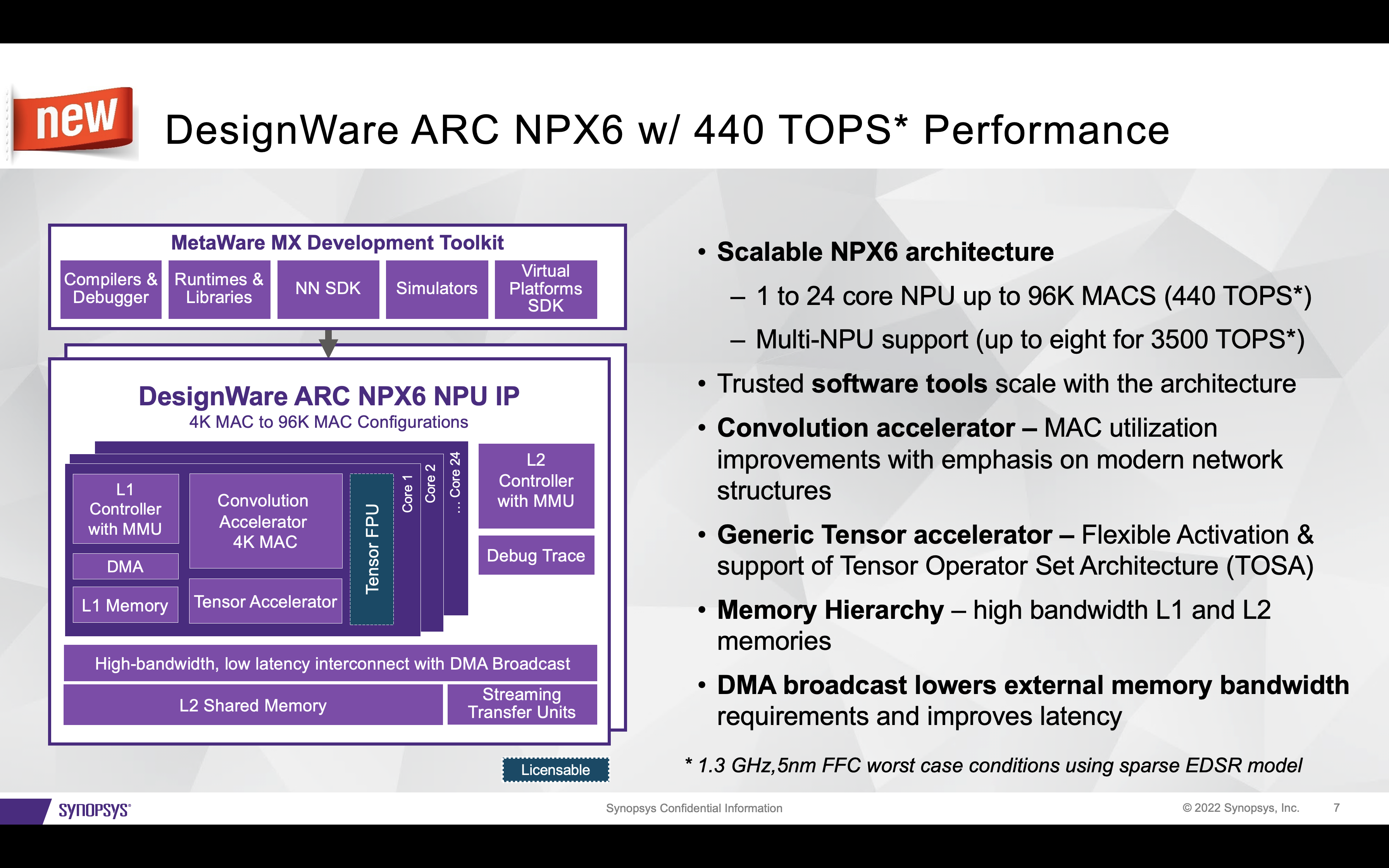

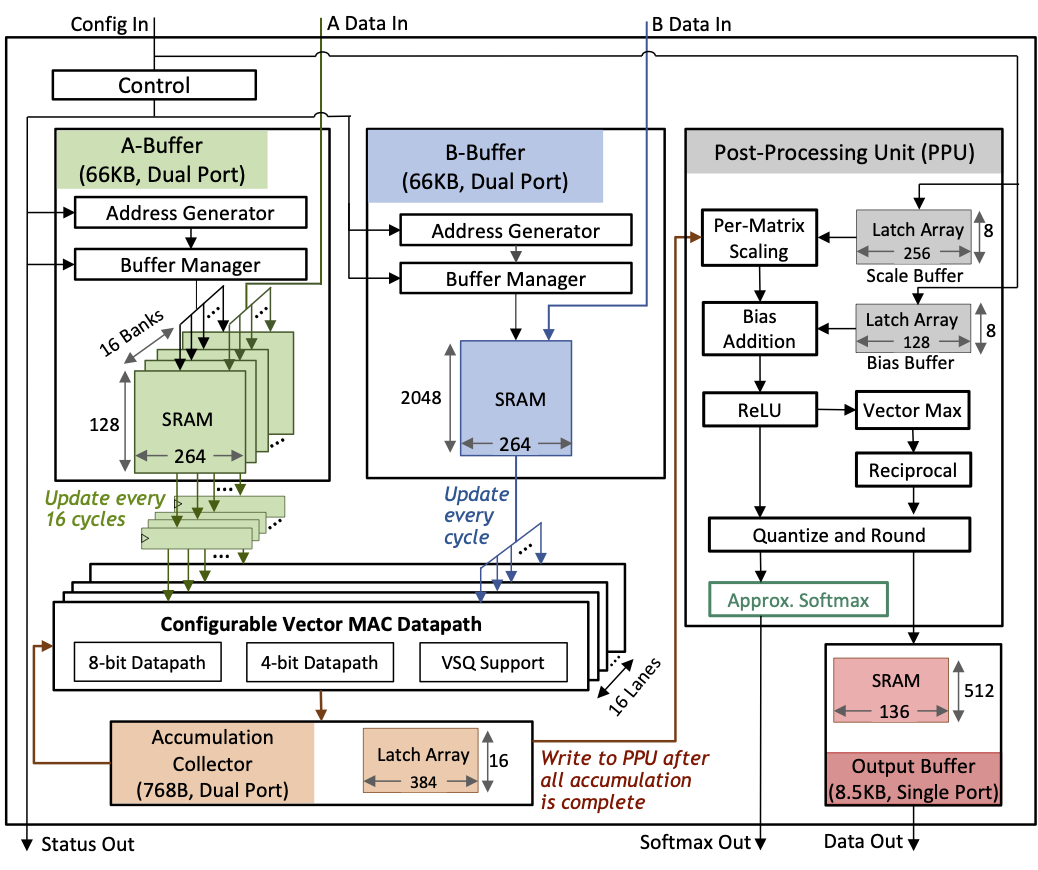

A 17–95.6 TOPS/W Deep Learning Inference Accelerator with Per-Vector Scaled 4-bit Quantization for Transformers in 5nm

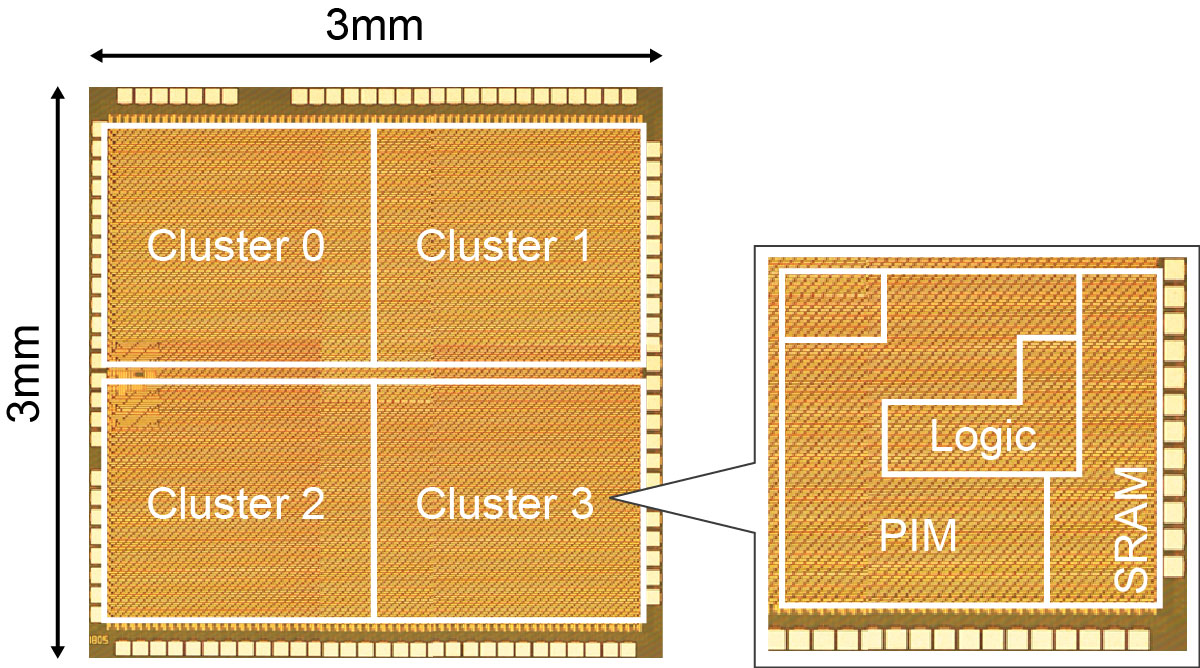
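The title above mentions per-vector scaled 4-bit quantization. As a rough illustration of the general idea, the sketch below splits a list of values into fixed-length vectors and gives each vector its own scale factor, so one large value does not erase the resolution of small values elsewhere. It assumes symmetric signed 4-bit codes in the range -7..7 and floating-point scales. The function names and vector length are hypothetical, and the accelerator's actual scale-factor format is not described here and may differ.

```python
from typing import List, Tuple

QMAX = 7  # symmetric signed 4-bit codes: -7..7 (the -8 code is left unused)

def quantize_per_vector(values: List[float], vector_len: int) -> Tuple[List[int], List[float]]:
    """Quantize to 4-bit integers with one scale factor per contiguous vector."""
    codes: List[int] = []
    scales: List[float] = []
    for start in range(0, len(values), vector_len):
        chunk = values[start:start + vector_len]
        amax = max(abs(v) for v in chunk)
        scale = amax / QMAX if amax > 0 else 1.0  # avoid dividing by zero for all-zero vectors
        scales.append(scale)
        # round to the nearest code, then clamp into the 4-bit range
        codes.extend(max(-QMAX, min(QMAX, round(v / scale))) for v in chunk)
    return codes, scales

def dequantize_per_vector(codes: List[int], scales: List[float], vector_len: int) -> List[float]:
    """Map codes back to real values using each element's vector scale."""
    return [c * scales[i // vector_len] for i, c in enumerate(codes)]

values = [0.1, -0.2, 0.35, 0.05, 10.0, -3.0, 2.5, 0.0]
codes, scales = quantize_per_vector(values, vector_len=4)
print(codes)
print(dequantize_per_vector(codes, scales, vector_len=4))

# For comparison: one scale for the whole list (vector_len = len(values)).
# The small first-half values all round to 0 at this coarser scale.
coarse_codes, _ = quantize_per_vector(values, vector_len=len(values))
print(coarse_codes)
```

The trade-off is storage: every vector carries its own scale, so shorter vectors give finer resolution at the cost of more scale factors.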

![[VLSI 2018] A 4M Synapses integrated Analog ReRAM based 66.5 TOPS/W Neural-Network Processor with Cell Current Controlled Writing and Flexible Network Architecture](https://t1.daumcdn.net/cfile/tistory/993DBA3E5BAF92CE38)

[VLSI 2018] A 4M Synapses integrated Analog ReRAM based 66.5 TOPS/W Neural-Network Processor with Cell Current Controlled Writing and Flexible Network Architecture

Mipsology Zebra on Xilinx FPGA Beats GPUs, ASICs for ML Inference Efficiency - Embedded Computing Design

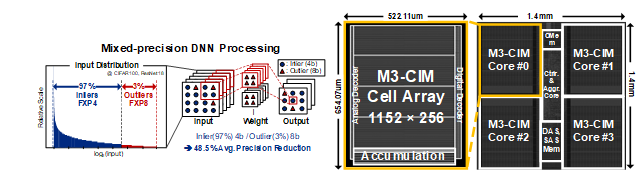

A 161.6 TOPS/W Mixed-mode Computing-in-Memory Processor for Energy-Efficient Mixed-Precision Deep Neural Networks (Prof. Hoi-Jun Yoo's lab) - KAIST School of Electrical Engineering

![A 0.3–2.6 TOPS/W precision-scalable processor for real-time large-scale ConvNets](https://d3i71xaburhd42.cloudfront.net/f2dd73ae127c5ee3713a92e1057eddea92fbf207/2-Figure1-1.png)

[PDF] A 0.3–2.6 TOPS/W precision-scalable processor for real-time large-scale ConvNets | Semantic Scholar

![A 3.43TOPS/W 48.9pJ/pixel 50.1nJ/classification 512 analog neuron sparse coding neural network with on-chip learning and classification in 40nm CMOS](https://d3i71xaburhd42.cloudfront.net/a2e283532b71e9b6af7addb3b3f4f4a1af6e0fb4/2-Figure1-1.png)

[PDF] A 3.43TOPS/W 48.9pJ/pixel 50.1nJ/classification 512 analog neuron sparse coding neural network with on-chip learning and classification in 40nm CMOS | Semantic Scholar

![A 0.32–128 TOPS, Scalable Multi-Chip-Module-Based Deep Neural Network Inference Accelerator With Ground-Referenced Signaling in 16 nm](https://d3i71xaburhd42.cloudfront.net/99d272ce0028fc67d6b6165633b5fb767f003a3d/1-Figure1-1.png)

[PDF] A 0.32–128 TOPS, Scalable Multi-Chip-Module-Based Deep Neural Network Inference Accelerator With Ground-Referenced Signaling in 16 nm | Semantic Scholar
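Most of the titles above state energy efficiency in TOPS/W (tera-operations per second per watt). Since a watt is a joule per second, TOPS/W reduces to 10^12 operations per joule, and its reciprocal is the energy per operation: 1 TOPS/W corresponds to 1 pJ/op. The small helper below, whose name is hypothetical, converts the efficiency figures quoted in these titles to femtojoules per operation.

```python
def tops_per_watt_to_fj_per_op(tops_per_watt: float) -> float:
    """Energy per operation, in femtojoules, for a given TOPS/W efficiency.

    1 TOPS/W = 1e12 op/s per 1 J/s = 1e12 op/J, so one op costs
    1e-12 / tops_per_watt joules = 1000 / tops_per_watt femtojoules.
    """
    if tops_per_watt <= 0:
        raise ValueError("efficiency must be positive")
    return 1000.0 / tops_per_watt

# Efficiency figures quoted in the titles above
for eff in (2.6, 3.43, 66.5, 95.6, 161.6):
    print(f"{eff} TOPS/W -> {tops_per_watt_to_fj_per_op(eff):.1f} fJ/op")
```

Many papers count one multiply-accumulate as two operations (a multiply and an add), but conventions vary, so TOPS/W figures from different papers are not always directly comparable.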